Publications

Publication list in reverse chronological order.

2026

- Real Noise Decoupling for Hyperspectral Image Denoising. Yingkai Zhang, Tao Zhang, Jing Nie, and Ying Fu. In Proc. of Association for the Advancement of Artificial Intelligence (AAAI), 2026.

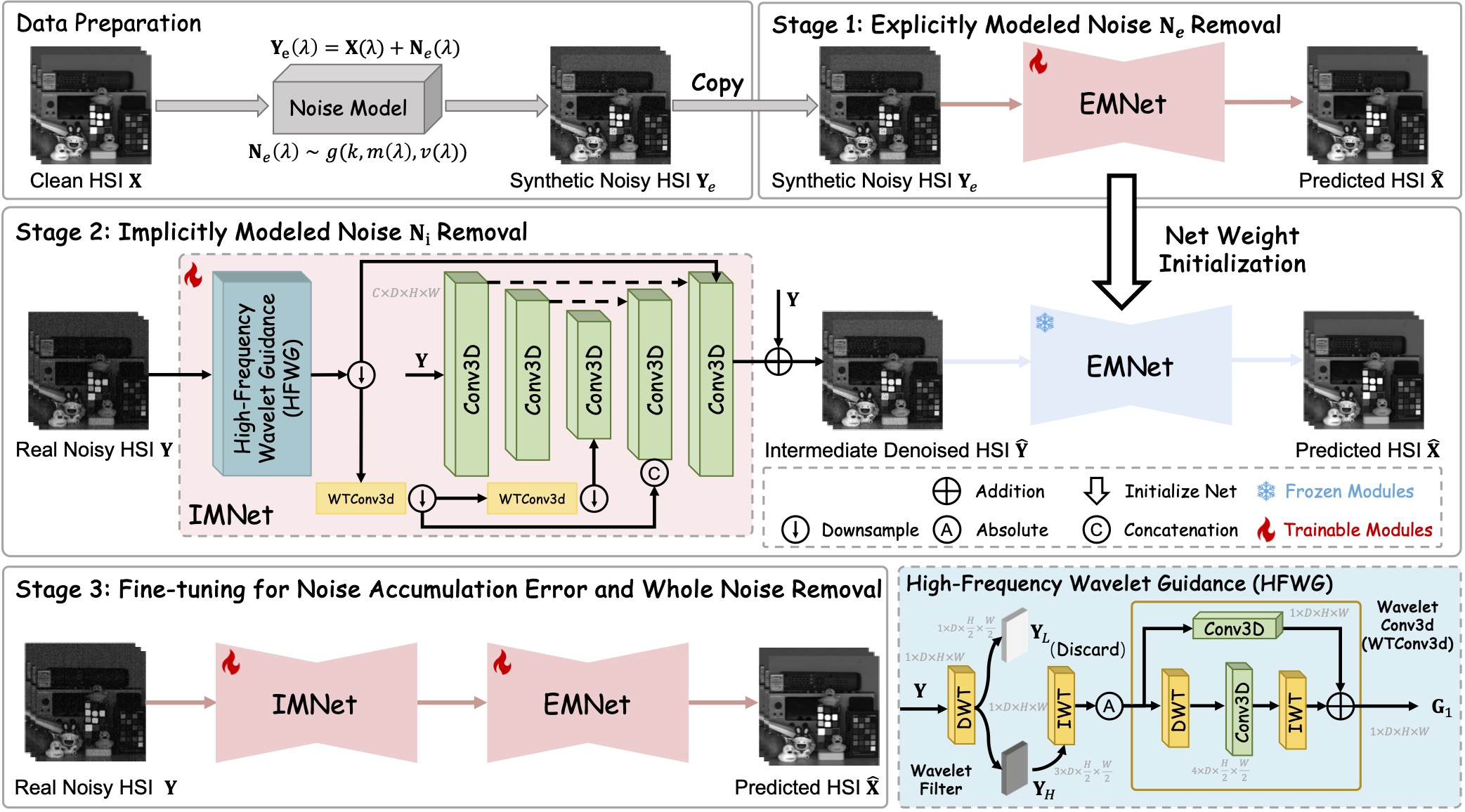

Hyperspectral image (HSI) denoising is a crucial step in enhancing the quality of HSIs. Noise modeling methods can fit noise distributions to generate synthetic HSIs to train denoising networks. However, the noise in captured HSIs is usually complex and difficult to model accurately, which significantly limits the effectiveness of these approaches. In this paper, we propose a multi-stage noise-decoupling framework that decomposes complex noise into explicitly modeled and implicitly modeled components. This decoupling reduces the complexity of noise and enhances the learnability of HSI denoising methods when applied to real paired data. Specifically, for explicitly modeled noise, we utilize an existing noise model to generate paired data for pre-training a denoising network, equipping it with prior knowledge to handle the explicitly modeled noise effectively. For implicitly modeled noise, we introduce a high-frequency wavelet guided network. Leveraging the prior knowledge from the pre-trained module, this network adaptively extracts high-frequency features to target and remove the implicitly modeled noise from real paired HSIs. Furthermore, to effectively eliminate all noise components and mitigate error accumulation across stages, a multi-stage learning strategy, comprising separate pre-training and joint fine-tuning, is employed to optimize the entire framework. Extensive experiments on public and our captured datasets demonstrate that our proposed framework outperforms state-of-the-art methods, effectively handling complex real-world noise and significantly enhancing HSI quality.

@inproceedings{zhang2025real,
  title = {Real Noise Decoupling for Hyperspectral Image Denoising},
  author = {Zhang, Yingkai and Zhang, Tao and Nie, Jing and Fu, Ying},
  booktitle = {{Proc. of Association for the Advancement of Artificial Intelligence (AAAI)}},
  year = {2026},
}

- Supervise-assisted Multi-modality Fusion Diffusion Model for PET Restoration. Yingkai Zhang*, Shuang Chen*, Ye Tian, Yunyi Gao, Jianyong Jiang, and Ying Fu. 2026.

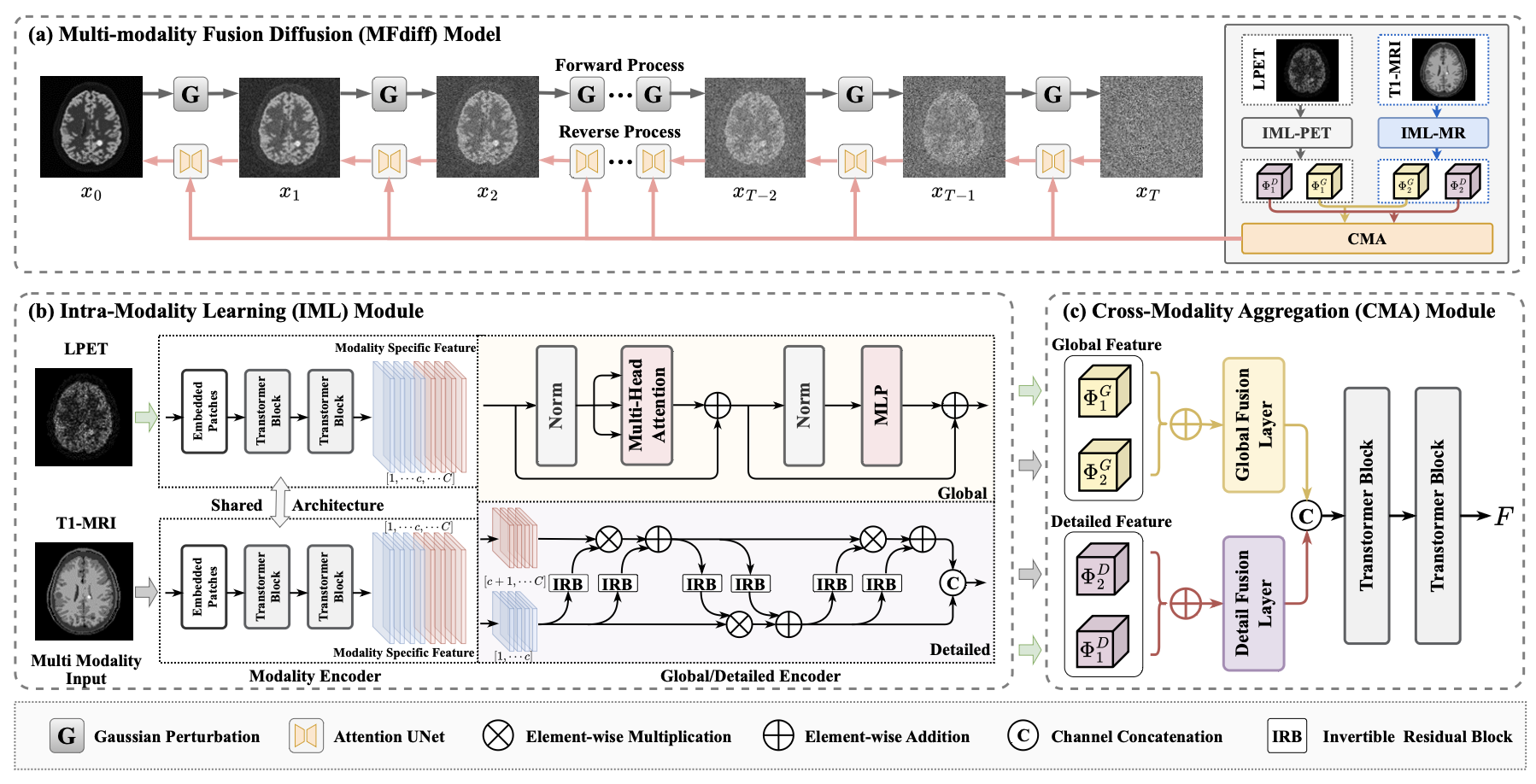

Positron emission tomography (PET) offers powerful functional imaging but involves radiation exposure. Efforts to reduce this exposure by lowering the radiotracer dose or scan time can degrade image quality. While using magnetic resonance (MR) images with clearer anatomical information to restore standard-dose PET (SPET) from low-dose PET (LPET) is a promising approach, it faces challenges with the inconsistencies in the structure and texture of multi-modality fusion, as well as the mismatch in out-of-distribution (OOD) data. In this paper, we propose a supervise-assisted multi-modality fusion diffusion model (MFdiff) for addressing these challenges for high-quality PET restoration. Firstly, to fully utilize auxiliary MR images without introducing extraneous details in the restored image, a multi-modality feature fusion module is designed to learn an optimized fusion feature. Secondly, using the fusion feature as an additional condition, high-quality SPET images are iteratively generated based on the diffusion model. Furthermore, we introduce a two-stage supervise-assisted learning strategy that harnesses both generalized priors from simulated in-distribution datasets and specific priors tailored to in-vivo OOD data. Experiments demonstrate that the proposed MFdiff effectively restores high-quality SPET images from multi-modality inputs and outperforms state-of-the-art methods both qualitatively and quantitatively.

@misc{zhang2026supervise,
  title = {Supervise-assisted Multi-modality Fusion Diffusion Model for PET Restoration},
  author = {Zhang, Yingkai and Chen, Shuang and Tian, Ye and Gao, Yunyi and Jiang, Jianyong and Fu, Ying},
  journal = {{Tsinghua Science and Technology}},
  year = {2026},
}

2025

- Unaligned RGB Guided Hyperspectral Image Super-Resolution with Spatial-Spectral Concordance. Yingkai Zhang, Zeqiang Lai, Tao Zhang, Ying Fu, and Chenghu Zhou. International Journal of Computer Vision, 2025.

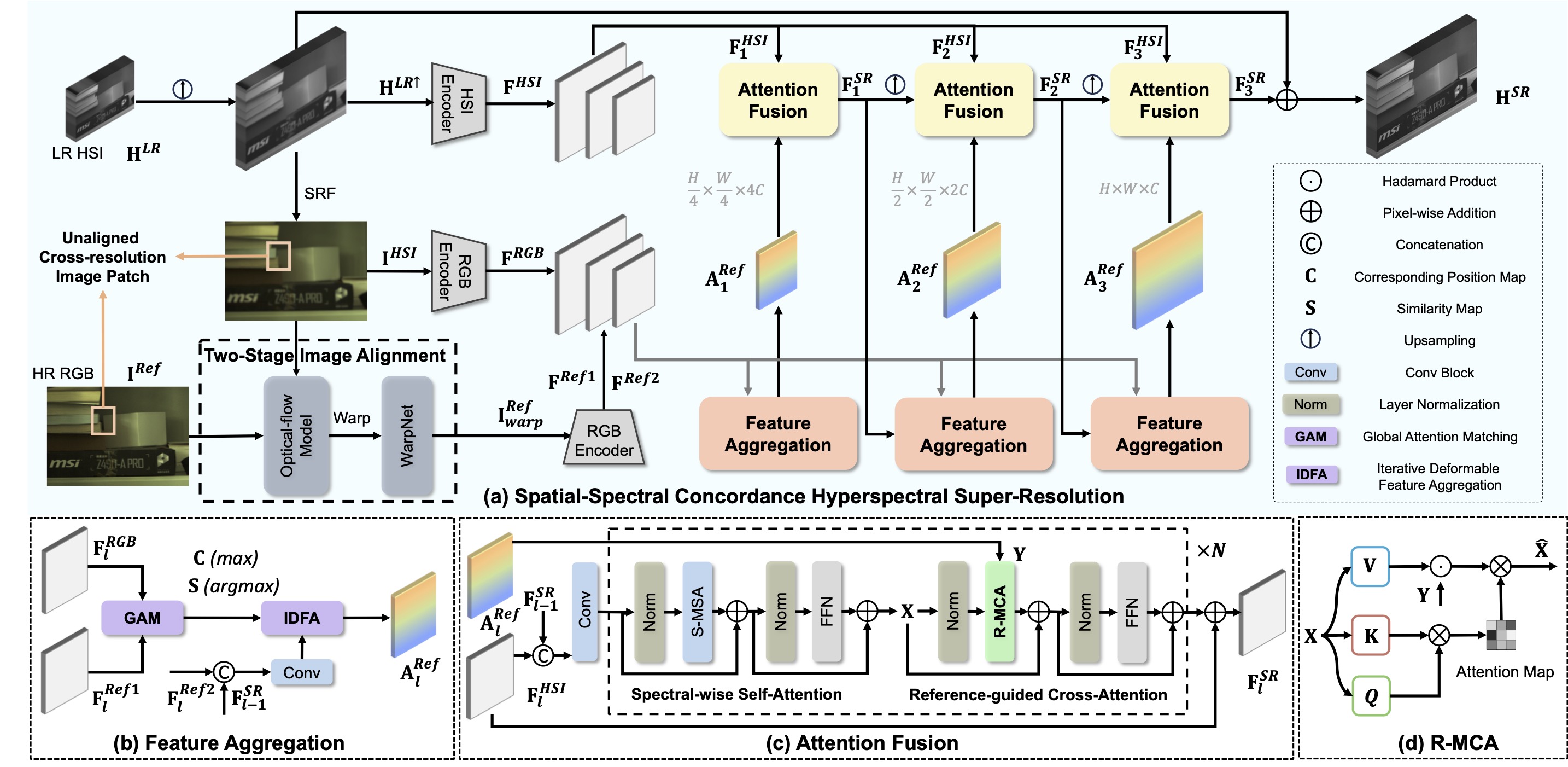

Hyperspectral images (HSIs) super-resolution (SR) aims to improve the spatial resolution, yet its performance is often limited at high-resolution ratios. The recent adoption of high-resolution reference images for super-resolution is driven by the poor spatial detail found in low-resolution HSIs, presenting it as a favorable method. However, these approaches cannot effectively utilize information from the reference image, due to the inaccuracy of alignment and its inadequate interaction between alignment and fusion modules. In this paper, we introduce a Spatial-Spectral Concordance Hyperspectral Super-Resolution (SSC-HSR) framework for unaligned reference RGB guided HSI SR to address the issues of inaccurate alignment and poor interactivity of the previous approaches. Specifically, to ensure spatial concordance, i.e., align images more accurately across resolutions and refine textures, we construct a Two-Stage Image Alignment (TSIA) with a synthetic generation pipeline in the image alignment module, where the fine-tuned optical flow model can produce a more accurate optical flow in the first stage and warp model can refine damaged textures in the second stage. To enhance the interaction between alignment and fusion modules and ensure spectral concordance during reconstruction, we propose a Feature Aggregation (FA) module and an Attention Fusion (AF) module. In the feature aggregation module, we introduce an Iterative Deformable Feature Aggregation (IDFA) block to achieve significant feature matching and texture aggregation with the fusion multi-scale results guidance, iteratively generating learnable offset. Besides, we introduce two basic spectral-wise attention blocks in the attention fusion module to model the inter-spectra interactions. Extensive experiments on three natural or remote-sensing datasets show that our method outperforms state-of-the-art approaches on both quantitative and qualitative evaluations. Our code is publicly available to the community (https://github.com/BITYKZhang/SSC-HSR).

@article{zhang2025unaligned,
  title = {Unaligned RGB Guided Hyperspectral Image Super-Resolution with Spatial-Spectral Concordance},
  author = {Zhang, Yingkai and Lai, Zeqiang and Zhang, Tao and Fu, Ying and Zhou, Chenghu},
  journal = {{International Journal of Computer Vision}},
  volume = {133},
  number = {9},
  pages = {6590--6610},
  year = {2025},
  publisher = {Springer}
}